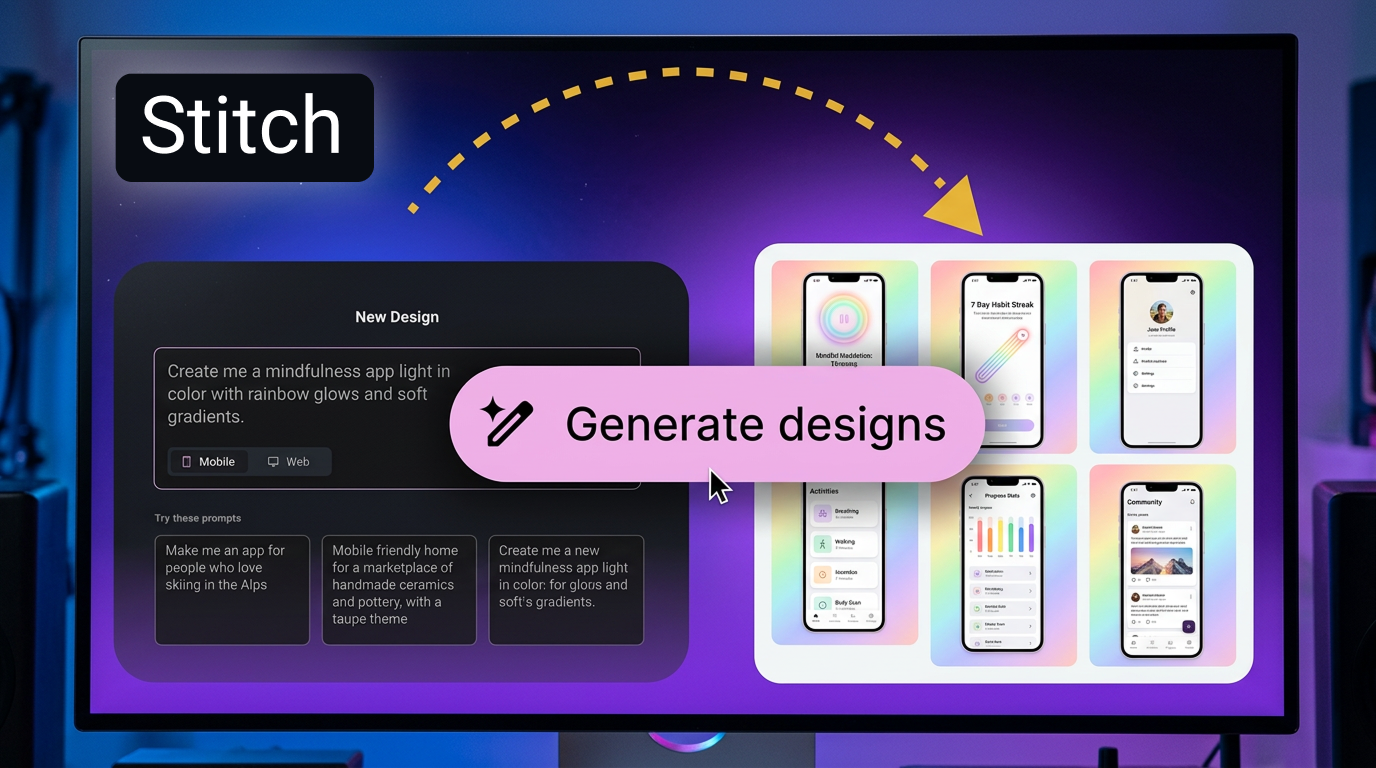

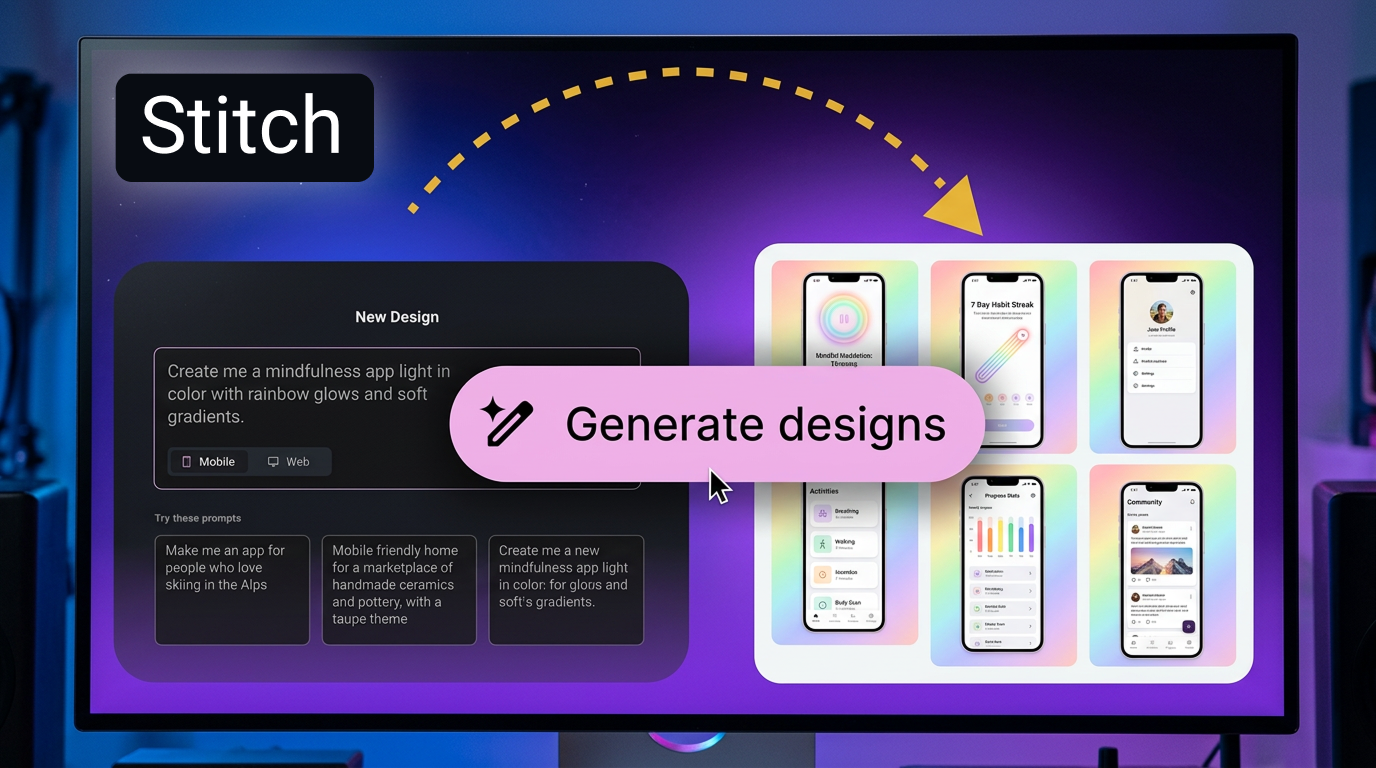

Google Stitch Designs Your App. Your Taste Pays for It.

Google Stitch arrives with a clean pitch: type a prompt, get a mobile UI. Export to Android Studio. Move on with your day. The engineering is impressive. The implications are something else entirely. We’re not watching a design tool get faster. We’re watching the act of design decision-making migrate from human judgment to machine inference. The core tension isn’t Automation vs Human Control. That’s too simple. The real tension is Taste vs Probability. Stitch doesn’t design. It predicts the most likely design. And in that gap—between what a human chooses and what a model forecasts—an entire profession rethinks why it exists.

The Obsolescence of the Blank Canvas

Opening a design tool used to mean something. The emptiness carried weight. You sat with it. You sketched. You erased. You argued with yourself about a button radius for 45 minutes. That friction wasn’t waste. It was the mechanism by which taste developed. You learned what worked by trying what didn’t. The blank canvas was a teacher.

Stitch removes the blank canvas. Not gradually. Immediately. You type words. The system returns finished screens. The transition from idea to artifact collapses from hours to seconds. And somewhere in that collapsed time, the thing that used to happen—the wandering, the wrong turns, the unexpected discovery—simply doesn’t happen anymore.

Then there’s this. The tool doesn’t just generate layouts. It generates Material Design layouts. Google’s own design language. The outputs look correct because they follow a system designed to look correct. But “correct” is a funny word in design. It means internally consistent. It doesn’t mean good. It doesn’t mean memorable. It doesn’t mean appropriate for the specific human being who will hold the phone and try to understand what the app wants from them. Correct is a statistical property. Design is a relational one. They’re not the same thing.

What the Prompt Conceals

Here’s the stranger part. Nobody talks about what goes into the prompt.

When a designer types “create a checkout screen for a fashion app,” Stitch resolves that request against a learned picture of what checkout screens for fashion apps typically look like. The model learned “typical” from millions of examples that were scraped, weighted, and trained on. Typical is fine. Typical works. Typical also means: whatever biases, patterns, and assumptions existed in the training data now exist in your app. You didn’t put them there consciously. You inherited them from a statistical average of everything that came before.

That’s not a design tool. That’s a replication engine with a friendly text input.

A junior designer using Stitch doesn’t know she’s reproducing the dominant e-commerce conventions of 2024. She just knows the output looks right. It looks like everything else. And that’s exactly the problem. The tool doesn’t optimize for distinction. It optimizes for probability. High probability means familiar. Familiar means invisible. Invisible means the app ships looking like every other app, and nobody notices, and the design profession slowly stops producing anything that surprises anyone.
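To make “optimizes for probability” concrete, here is a toy sketch in Python. It is not how Stitch works internally, which nothing here documents. The pattern names and counts are invented, and `most_likely_pattern` and `distinct_outputs` are hypothetical helpers. It only shows what happens when every team accepts the single most frequent pattern from the same learned distribution.

```python
from collections import Counter

# Invented frequencies of checkout-layout patterns in an imaginary training set.
PATTERN_COUNTS = Counter({
    "single-column form, sticky pay button": 620,
    "accordion steps": 240,
    "one-tap wallet sheet": 110,
    "conversational checkout": 25,
    "gesture-driven cart": 5,
})


def most_likely_pattern(counts):
    """Return the pattern with the highest observed frequency."""
    pattern, _ = counts.most_common(1)[0]
    return pattern


def distinct_outputs(num_teams, counts):
    """Count how many different patterns ship if every team takes the top prediction."""
    return len({most_likely_pattern(counts) for _ in range(num_teams)})


if __name__ == "__main__":
    print(most_likely_pattern(PATTERN_COUNTS))
    print(distinct_outputs(50, PATTERN_COUNTS))
```

Fifty teams, one layout. The rare patterns are still in the data. They just never get chosen.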

Weird. Design tools once existed to help you make something new. This one helps you make something average. Faster.

But speed is the only metric that ships with the press release.

The Silent Surveillance Layer

Let’s talk about what Stitch learns from its users. Every prompt. Every generated screen. Every accepted layout. Every rejected variation. The system sees it all.

Google frames this as improvement. Better outputs. Smarter suggestions. The model gets stronger with use. But the data flows upward into systems that have no obligation to explain themselves. Your design choices—what you keep, what you discard, how you iterate—become training material for the next version of the model. The model that might, eventually, not need you to prompt it at all.

Who owns the taste embedded in those prompts? Who owns the design sensibility that a team spent years developing, now encoded in natural language requests fed into a black box? The terms of service probably have an answer. Nobody reads it. The answer doesn’t matter anyway because the alternative—not using the tool—means shipping slower than the competitor who does.

This is the surveillance layer nobody calls surveillance. Not cameras. Not microphones. But a record of creative decision-making, absorbed silently, used to train the system that renders the decision-maker less necessary with every interaction. Your prompts are data. Your selections are labels. Your taste is a training signal for a model that converges toward the mean.
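The feedback loop described here can be sketched as a small simulation. It is an illustration under loud assumptions, not a description of Google’s training pipeline: the starting shares, the `generated_fraction` parameter, and the rule that every newly generated screen copies the current top pattern are all made up.

```python
# Invented starting mix of design patterns in the training data.
STARTING_SHARES = {"conventional": 0.6, "unusual": 0.3, "experimental": 0.1}


def simulate_feedback(shares, rounds, generated_fraction=0.5):
    """Retrain on a mix of old data and new data made only of the top pattern.

    Each round, `generated_fraction` of the next training set is assumed to be
    model output, and all of that output is assumed to be the most common pattern.
    Returns the final shares and the top pattern's share after each round.
    """
    shares = dict(shares)
    history = [max(shares.values())]
    for _ in range(rounds):
        top = max(shares, key=shares.get)
        shares = {
            name: (1 - generated_fraction) * share
            + (generated_fraction if name == top else 0.0)
            for name, share in shares.items()
        }
        history.append(shares[top])
    return shares, history


if __name__ == "__main__":
    final_shares, top_share_history = simulate_feedback(STARTING_SHARES, rounds=3)
    print(top_share_history)
    print(final_shares)
```

Under these assumptions the top pattern’s share only goes up, and the experimental share halves every round. Real systems are messier, but the direction is the point.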

How the Designer’s Brain Changes

Skills don’t vanish. They atrophy. Like muscles.

The designer who uses Stitch for six months doesn’t lose the ability to draw a layout from scratch. But she loses the speed at which she accesses that ability. The neural pathways that once connected “user need” to “visual solution” without intermediate steps get slower. They get dusty. The tool steps in faster each time. The tool is always available. The tool is always faster. The tool is always more confident than a tired human at 11:43 PM.

So she stops sketching. Why sketch when Stitch generates ten options in the time it takes to open Figma? She stops questioning layout conventions. Stitch knows the conventions. She stops developing the one skill that distinguished senior designers from everyone else: the ability to see a blank screen and know, with the kind of intuition that comes from years of painful trial and error, what belongs there.

What emerges is a new kind of designer. Not worse. Different. A designer who excels at prompt formulation. Who knows how to describe desired outcomes in language the model understands. Who curates rather than creates. Who has opinions about outputs rather than processes. This person is valuable. This person ships fast. But is this person a designer in any historical sense of the word? Not quite. The word is changing. The people are changing with it.

Dependency vs Empowerment

The pitch says empowerment. The reality is dependency.

Once you integrate Stitch into a production workflow, removing it becomes painful. The team’s velocity depends on it. The sprint planning assumes it. The junior designers trained on it don’t know how to work without it. The senior designers who resisted it look slow. Their slowness was once called craft. Now it’s called inefficiency.

This dependency doesn’t announce itself. It accumulates. Sprint by sprint. Prompt by prompt. The organization wakes up one day and realizes that nobody on the team has designed an original screen from scratch in eight months. The muscle is gone. The tool didn’t take it. The team gave it away, gladly, in exchange for speed.

And Google? Google benefits from this dependency in ways that go far deeper than tool adoption metrics. Every app built with Stitch adheres to Material Design. Every screen follows Google’s interaction patterns. The Android ecosystem becomes more consistent. More predictable. More Googley. Not because Google mandated it. Because the path of least resistance leads there, and Stitch is the smoothest path anyone has ever paved.

The tool shapes the output. The output shapes the platform. The platform shapes what users expect. What users expect becomes what designers make. The loop closes. Quietly.

The 12-Month Cascade

Months 1–3: Early adopters integrate Stitch into prototyping workflows. Speed improvements are undeniable. Design Twitter fills with hot takes. Most miss the point. The debate is about “will AI replace designers?” The real question is “what happens to design when the average becomes automated?”

Months 4–6: First production apps ship with screens generated entirely by Stitch. Nobody notices. That’s the point. The outputs look like everything else. Users can’t tell. Designers who can tell start asking uncomfortable questions in team meetings. Those questions don’t get answered. They get tabled. The sprint is too tight.

Months 7–9: Design education hits a crisis point. Students ask why they’re learning composition and typography fundamentals when a prompt generates passable results in seconds. Teachers don’t have good answers. Some programs pivot to prompt engineering. Some double down on craft. The field splits. Neither side trusts the other.

Months 10–12: A Stitch-generated app wins a design award. Or gets nominated. Or appears in a roundup. Someone notices the screens look familiar. Reverse image search traces patterns to three other apps. All built with Stitch. All using similar prompts. The industry has to decide: is this plagiarism, efficiency, or the new normal? The argument doesn’t resolve. It just becomes part of the job.

The Taste Gap

Here’s the thing nobody in Mountain View will say out loud. Stitch is very good at producing competent work. Competent is a high bar. Most human designers produce competent work most of the time. Stitch does it faster. Stitch does it cheaper. Stitch doesn’t need health insurance.

But Stitch cannot produce exceptional work. It cannot, almost by definition, because exceptional work sits in the far tails of the probability distribution the model learned, and a model that predicts the most likely design rarely goes there. The model predicts the center. The center is fine. The center ships. The center makes money. But the center is not where design matters most. Design matters most at the edges. The strange idea. The counterintuitive layout. The interaction that breaks a convention because the convention was wrong.

Stitch doesn’t break conventions. It reinforces them. At scale. Across an entire ecosystem. And the designers who could break conventions—the ones with the taste and nerve to push past the probable—need years of blank canvases to develop that instinct. Blank canvases that Stitch replaces with pre-filled screens before the instinct ever forms.

So we’re building a tool that accelerates the production of average work while quietly starving the conditions that produce great work. That sounds like a criticism. It’s just a description. The market rewards speed. The tool provides speed. The trade-off is invisible for long enough that by the time anyone notices, the ecosystem has already adapted around the tool, and going back means being slower than everyone else.

Designers will still exist in five years. Design decisions will still get made. But the making of those decisions—the messy, slow, friction-filled process that produced taste through suffering—that’s what’s disappearing. Not with a bang. With a prompt.

Reality Check

“So Google built a thing where you describe an app screen with words and it just… makes it. Looks decent. Saves a ton of time. But also, every app might start looking the same. And junior designers might never learn how to actually design. Kinda feels like the deal is speed now, consequences later.”
